
Assistive Mobile Manipulation Pilot

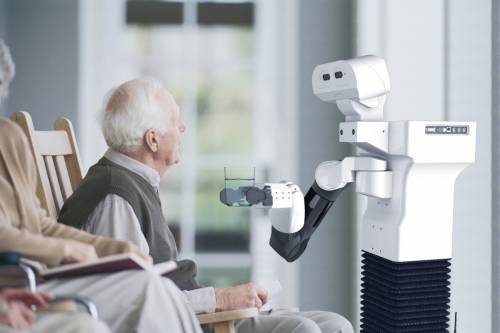

This pilot focuses specifically on robotic health-care adaptation. It showcases the development and programming of assistive mobile robots in unstructured and dynamic environments, where the robot has to perform complex tasks that combine several capabilities such as mobility, perception, navigation, manipulation and human-robot interaction. Furthermore, these capabilities have to be customised for an individual and for a specific apartment. The key to success in these changing environments is to encapsulate the different functionalities at design time, to combine them at deployment time and to let them interact at run time.

The system addressed in this pilot is a TIAGo mobile manipulator working in a standard apartment.

This pilot demonstrates:

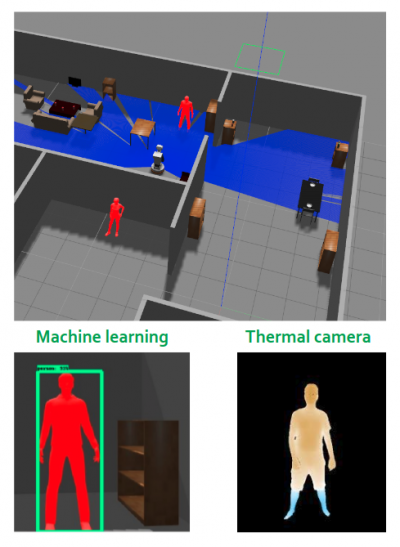

- Replacement of component(s): the System Builder wants to exchange components that provide the same functionality. The prepared demonstrator consists of an application that detects people using different combinations of software and hardware components. For example, the component that detects people with the RGB-D camera and deep learning can be exchanged for a component based on the thermal camera, which does not use deep learning techniques but is more expensive.

- Composition of components: the System Builder wants to create a new TIAGo robot and checks via the data sheet (in the form of a digital model) whether the new building block (the interface) fits into the system, given the constraints of the system and the variation points of the building block.

- Task Coordination for Object Detection: the Behaviour Developer wants to prepare the coordination of the system components to look for standard objects in an apartment.
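The composition check described above can be illustrated with a minimal sketch. It assumes a much simplified data sheet; the class names, fields and example values below (`DataSheet`, `SystemConstraints`, `max_rate_hz`, the service names) are hypothetical and are not taken from the RobMoSys digital data sheet model or the SmartMDSD Toolchain.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataSheet:
    """Hypothetical, simplified digital data sheet of a building block."""
    name: str
    provides: frozenset   # services the block offers
    requires: frozenset   # services the block needs from the system
    max_rate_hz: float    # variation point: highest supported update rate


@dataclass(frozen=True)
class SystemConstraints:
    """Hypothetical constraints imposed by the system being composed."""
    available_services: frozenset
    needed_service: str
    min_rate_hz: float


def fit_problems(block: DataSheet, system: SystemConstraints) -> list:
    """Return the reasons why the block does not fit (empty list: it fits)."""
    problems = []
    if system.needed_service not in block.provides:
        problems.append(f"{block.name} does not provide {system.needed_service}")
    missing = block.requires - system.available_services
    if missing:
        problems.append(f"{block.name} requires missing services: {sorted(missing)}")
    if block.max_rate_hz < system.min_rate_hz:
        problems.append(
            f"{block.name} supports at most {block.max_rate_hz} Hz, "
            f"but the system needs {system.min_rate_hz} Hz"
        )
    return problems


# Illustrative values only; they do not describe the real TIAGo components.
system = SystemConstraints(
    available_services=frozenset({"rgbd_image", "laser_scan"}),
    needed_service="people_detection",
    min_rate_hz=5.0,
)
rgbd_block = DataSheet(
    name="rgbd_people_detector",
    provides=frozenset({"people_detection"}),
    requires=frozenset({"rgbd_image"}),
    max_rate_hz=10.0,
)
thermal_block = DataSheet(
    name="thermal_people_detector",
    provides=frozenset({"people_detection"}),
    requires=frozenset({"thermal_image"}),
    max_rate_hz=10.0,
)

rgbd_result = fit_problems(rgbd_block, system)
thermal_result = fit_problems(thermal_block, system)
```

In this sketch the second block is rejected because the example system offers no thermal image service. The point is that the check runs on the models alone, before any component is deployed.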

Pilot Skeleton Resources and Software

The setup consists of a TIAGo assistive mobile manipulator robot navigating in a private apartment to assist the person living in the flat. Base functionality such as object and people detection, arm control and navigation, as well as exemplary human-robot interaction interfaces, is available for contributors to extend, modify or build upon. The TIAGo robot is a wheeled mobile manipulator equipped with odometry, a laser scanner, a depth camera and a thermal sensor. The different capabilities can be tested in simulation and on the real robot. For people detection, two different algorithms, using two different sensors, can be swapped or combined:

- RGB-D camera + Deep learning people detection

- Thermal camera + OpenCV people detection

The idea is to choose the right combination to fulfil the requirements.
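As a rough illustration of choosing a combination from requirements, the sketch below describes both options behind one common shape and picks the cheapest one that satisfies the stated constraints. Everything in it is an assumption made for the example: the `DetectorOption` fields, the stub detection functions and the `relative_cost` ranking are placeholders, not the pilot's actual components or measured properties.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class DetectorOption:
    """Hypothetical description of one sensor + algorithm combination."""
    name: str
    sensor: str
    uses_deep_learning: bool
    relative_cost: int              # placeholder ranking, not a real price
    detect: Callable[[list], int]   # returns the number of people in a frame


def rgbd_stub(frame: list) -> int:
    """Stand-in for a deep learning detector: counts pre-labelled entries."""
    return sum(1 for label in frame if label == "person")


def thermal_stub(frame: list) -> int:
    """Stand-in for a thermal detector: counts readings above 30 degrees C."""
    return sum(1 for temperature in frame if temperature > 30.0)


OPTIONS: List[DetectorOption] = [
    DetectorOption("rgbd_deep_learning", "RGB-D camera", True, 1, rgbd_stub),
    DetectorOption("thermal_opencv", "thermal camera", False, 2, thermal_stub),
]


def choose(options: List[DetectorOption],
           allow_deep_learning: bool,
           max_relative_cost: int) -> Optional[DetectorOption]:
    """Pick the cheapest option that meets the requirements, or None."""
    candidates = [
        option for option in options
        if (allow_deep_learning or not option.uses_deep_learning)
        and option.relative_cost <= max_relative_cost
    ]
    return min(candidates, key=lambda option: option.relative_cost) if candidates else None


# Example requirement: deep learning is not allowed, cost up to rank 2.
selected = choose(OPTIONS, allow_deep_learning=False, max_relative_cost=2)
people_seen = selected.detect([22.0, 36.5, 21.0, 37.1]) if selected else 0
```

Because both options expose the same `detect` callable, the rest of the application does not change when one is exchanged for the other.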

The intended application of this mobile manipulator is to assist people in a private apartment. Potential use cases therefore include creating assistive applications for TIAGo (welcoming visitors to the apartment, finding objects in the apartment, delivering items to the elderly person, …) and extending the pilot skeleton with components related to specific fields such as HRI, object/people recognition and manipulation. Interfacing with the pilot is possible at different levels, from the component level to the task level.

The pilot resources can be downloaded and executed on any computer via a Docker container, which includes everything needed to run the TIAGo simulation and the SmartMDSD Toolchain. To shorten development time, the components are built as bridges: ROS interfaces that give access to the legacy code that already exists for the TIAGo robot. This also shows that RobMoSys works well with previously implemented legacy code, even if the benefits of RobMoSys are then limited by the capabilities and the design of the existing code. Nonetheless, some components may be replaced with native RobMoSys components.

Access to the resources is granted specifically to the pilot developers, on demand. A ready-to-run container, the TIAGo docker, is available (including Ubuntu Linux, the SmartMDSD Toolchain, the Eclipse IDE, the Gazebo simulation and the TIAGo ROS packages). All the instructions to build and run the containers, run the demos and work on the pilot are given in the readme files included in the resource repositories.

Software available:

- RobMoSys software components to use with SmartMDSD Toolchain

- TIAGo SmartMDSD repositories (navigation, SmartMDSD to ROS bridge ports, System TIAGo deployment)

- TIAGo ROS packages (manipulation, navigation and perception)

Extra RobMoSys Software Baseline:

- The pilot is related to the Gazebo/TIAGo/SmartSoft Scenario. It runs the TIAGo platform with the flexible navigation stack in the SmartSoft World.

http://www.robmosys.eu/wiki/pilots:assistive-manipulation